AI Agent Teams: When Coding AIs Form Teams and Collaborate

The era of solo AI coding is over. Claude Code's Agent Teams lets multiple AI agents divide roles under a team leader, communicate directly, and work in parallel. From building a C compiler with 16 agents to real-world developer workflows, we analyze the state of AI collaboration.

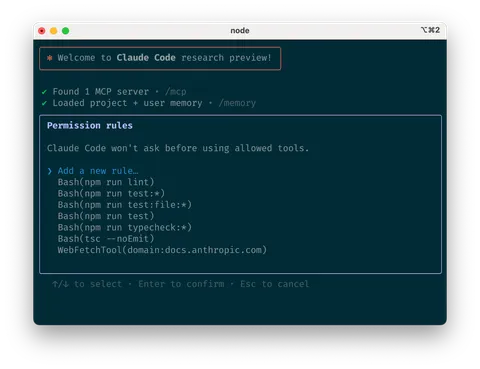

AI writing code is no longer news. But what if multiple AIs form a team, communicate with each other, and complete a project together? Anthropic's Agent Teams, introduced in Claude Code v2.1.32, turns that possibility into reality. Multiple Claude Code instances divide roles under a team leader, coordinate through a shared task list, and exchange direct messages between teammates.

In this article, we examine the architecture and mechanics of Agent Teams, the real-world case of 16 agents building a C compiler from scratch, and how it compares to competing tools.

Agent Teams Architecture: How AIs Form Teams

Agent Teams consists of four core components. The Team Lead is the main Claude Code session that creates the team, spawns teammates, and distributes work. Teammates are independent Claude Code instances that execute assigned tasks. The shared Task List tracks work through three states: pending, in_progress, and completed. The Mailbox handles inter-agent messaging.
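To make the task list's three states concrete, here is a minimal sketch of how such a list could be modeled. It is not Claude Code's actual implementation: the `Task`, `TaskList`, `claim`, and `complete` names are hypothetical, and only the three state names come from the description above.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class TaskState(Enum):
    # The three states named in the article.
    PENDING = "pending"
    IN_PROGRESS = "in_progress"
    COMPLETED = "completed"


@dataclass
class Task:
    title: str
    state: TaskState = TaskState.PENDING
    owner: Optional[str] = None  # hypothetical field: which teammate holds the task


@dataclass
class TaskList:
    tasks: List[Task] = field(default_factory=list)

    def add(self, title: str) -> Task:
        """The lead adds a new task, which starts out pending."""
        task = Task(title)
        self.tasks.append(task)
        return task

    def claim(self, teammate: str) -> Optional[Task]:
        """Give the first pending task to a teammate, or None if nothing is pending."""
        for task in self.tasks:
            if task.state is TaskState.PENDING:
                task.state = TaskState.IN_PROGRESS
                task.owner = teammate
                return task
        return None

    def complete(self, task: Task) -> None:
        """Mark a task as finished."""
        task.state = TaskState.COMPLETED


# Usage: the lead queues work, a teammate claims and finishes it.
board = TaskList()
board.add("Implement login endpoint")
claimed = board.claim("backend")
board.complete(claimed)
```

Each task moves strictly forward through the three states, which is what lets every teammate read the same list and know what is still open.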

The critical difference from subagents is the communication structure. Subagents can only report results back to the main agent. In Agent Teams, teammates can communicate directly with each other (P2P messaging). A frontend agent can ask the backend agent, "Is this API response format correct?" without going through the lead.
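The peer-to-peer idea can be illustrated with a toy mailbox in which any teammate can address any other by name. This is a sketch under assumptions, not the real Mailbox: the `Message` and `Mailbox` classes and their methods are hypothetical.

```python
from collections import defaultdict, deque
from dataclasses import dataclass


@dataclass(frozen=True)
class Message:
    sender: str
    recipient: str
    body: str


class Mailbox:
    """Hypothetical in-memory mailbox: one FIFO inbox per agent name."""

    def __init__(self):
        self._inboxes = defaultdict(deque)

    def send(self, sender, recipient, body):
        # Deliver straight to the recipient's inbox; no lead in the path.
        self._inboxes[recipient].append(Message(sender, recipient, body))

    def receive(self, recipient):
        # Return the oldest unread message, or None if the inbox is empty.
        inbox = self._inboxes[recipient]
        return inbox.popleft() if inbox else None


mailbox = Mailbox()
mailbox.send("frontend", "backend", "Is this API response format correct?")

to_backend = mailbox.receive("backend")  # the frontend's question
to_lead = mailbox.receive("lead")        # None: the lead was never involved
```

In a subagent-style design, the same question would have to be returned to the main agent and relayed from there.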

Subagents vs Agent Teams: When to Use Which

Agent Teams is not a strict upgrade over subagents. The two approaches serve different purposes.

Subagents run within a single session and are ideal for focused tasks where only the result matters. Token costs are lower. Agent Teams shines when teammates need discussion and collaboration. Multiple agents can simultaneously review security, performance, and test coverage in a code review, or debate competing hypotheses during debugging.

| Aspect | Subagents | Agent Teams |
|---|---|---|
| Communication | Report to main only | Direct peer-to-peer |
| Coordination | Main manages everything | Shared task list |
| Context | Results summarized back | Independent context windows |
| Best for | Simple delegation, focused tasks | Complex work requiring discussion |
| Token cost | Lower | Higher (scales with instances) |

Case Study: 16 AIs Built a C Compiler

The most dramatic demonstration of Agent Teams' potential came from Anthropic Safeguards team researcher Nicholas Carlini. He deployed 16 agents simultaneously to build a Rust-based C compiler from scratch.

Over approximately two weeks, about 2,000 Claude Code sessions ran, costing roughly $20,000 in API fees. The result was 100,000 lines of code that successfully compiled Linux 6.9 across x86, ARM, and RISC-V architectures. The GCC torture test pass rate reached 99%.
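A quick back-of-the-envelope calculation puts these figures in perspective. The inputs are the rounded numbers quoted above, so the results are rough averages rather than measured values.

```python
# Rounded figures quoted in the article.
total_cost_usd = 20_000
sessions = 2_000
lines_of_code = 100_000
agents = 16

cost_per_session = total_cost_usd / sessions   # 10.0 USD per session
cost_per_line = total_cost_usd / lines_of_code  # 0.2 USD per line
lines_per_session = lines_of_code / sessions    # 50.0 lines per session
sessions_per_agent = sessions / agents          # 125.0 sessions per agent

print(f"~${cost_per_session:.2f} per session, ~${cost_per_line:.2f} per line")
```

On average, then, each session cost about $10 and contributed about 50 lines to the final codebase.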

What makes this case remarkable isn't simply "AI wrote a lot of code." The key is that multiple AIs divided roles, worked in parallel, integrated each other's outputs, and produced a complete, functional piece of software without conflicts.

Custom Agents: Designing Your Own AI Team

The real power of Agent Teams lies in custom agents. Developers can define each agent's role, tool access, and model in markdown files within the .claude/agents/ directory. For example, a "security reviewer" can only read code without modification, a "frontend developer" can only modify specific directories, and a "utility" handles only builds and deployments.
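As a rough illustration, the snippet below renders what a read-only "security reviewer" definition might look like. It assumes the agent file is markdown with a YAML frontmatter header; the field names (`name`, `description`, `tools`, `model`), the tool names, and the model alias are assumptions to verify against the official documentation, and `render_agent_file` is a hypothetical helper.

```python
def render_agent_file(name, description, tools, model, prompt):
    """Build the text of an agent definition: frontmatter, then the role prompt.

    The frontmatter keys used here are assumptions, not a confirmed schema.
    """
    frontmatter = [
        "---",
        f"name: {name}",
        f"description: {description}",
        f"tools: {', '.join(tools)}",
        f"model: {model}",
        "---",
    ]
    return "\n".join(frontmatter) + "\n\n" + prompt.strip() + "\n"


security_reviewer = render_agent_file(
    name="security-reviewer",
    description="Reviews code for security issues without modifying it.",
    tools=["Read", "Grep", "Glob"],  # read-only tools; no editing tool listed
    model="sonnet",
    prompt="You are a security reviewer. Report findings; never edit files.",
)

# Saving this text as .claude/agents/security-reviewer.md would register the role.
print(security_reviewer)
```

Restricting the tool list is what keeps the reviewer read-only: the role can inspect code but has no tool for changing it.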

Delegate mode forces the team leader to focus purely on coordination without writing code. Plan Approval requires teammates to draft a plan and receive leader approval before execution. These guardrails enable controlled parallel work even in complex projects.
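The Plan Approval guardrail amounts to a gate between drafting and executing. The sketch below shows that gate in miniature; the `Plan`, `review`, and `execute` names are hypothetical and do not reflect how Claude Code implements the feature.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class PlanStatus(Enum):
    DRAFT = "draft"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Plan:
    author: str
    steps: List[str]
    status: PlanStatus = PlanStatus.DRAFT


def review(plan: Plan, approve: bool) -> Plan:
    """The lead's decision moves the plan out of the draft state."""
    plan.status = PlanStatus.APPROVED if approve else PlanStatus.REJECTED
    return plan


def execute(plan: Plan) -> List[str]:
    """A teammate may only act on a plan the lead has approved."""
    if plan.status is not PlanStatus.APPROVED:
        raise PermissionError(f"plan by {plan.author} is {plan.status.value}")
    return [f"{plan.author}: {step}" for step in plan.steps]


plan = Plan(author="backend", steps=["add migration", "update handler"])
review(plan, approve=True)
log = execute(plan)
```

Calling `execute` on a draft or rejected plan raises an error, which mirrors the idea that no work starts before the lead signs off.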

Competitive Landscape: AI Coding Agent Comparison

The AI coding agent market is heating up rapidly. Where does Claude Code Agent Teams stand in this competition?

Devin aims for full autonomy but makes real-time user intervention difficult. OpenAI Codex can run multiple agents in parallel but does not support direct inter-agent communication. Cursor's Background Agents can also run parallel tasks but lack inter-agent communication.

Agent Teams differentiates itself in two ways. First, P2P direct communication enables real-time collaboration between agents. Second, a shared task list with dependency management provides structural coordination for complex workflows. However, the high token consumption presents a real barrier to entry.

| Tool | Multi-Agent | Inter-Agent Communication | Key Feature |
|---|---|---|---|
| Claude Code Agent Teams | Yes | Direct P2P | Shared task list, custom roles |
| Devin | No (single) | N/A | Full autonomy, limited user control |
| OpenAI Codex | Yes (parallel) | No | Parallel execution, worktree isolation, no inter-agent communication |
| Cursor Background Agents | Yes (parallel) | No | Parallel execution, no mutual communication |
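The dependency management mentioned above can be pictured as a simple rule: a task becomes available only once everything it depends on is completed. The following is a minimal sketch of that rule with hypothetical task names, not the tool's actual scheduler.

```python
def ready_tasks(dependencies, completed):
    """Return unfinished tasks whose dependencies are all completed, sorted by name.

    dependencies maps each task name to the set of task names it depends on.
    """
    return sorted(
        task
        for task, deps in dependencies.items()
        if task not in completed and deps <= completed
    )


# Hypothetical workflow: the API design unblocks both halves of the app.
dependencies = {
    "design-api": set(),
    "backend": {"design-api"},
    "frontend": {"design-api"},
    "integration-tests": {"backend", "frontend"},
}

first_wave = ready_tasks(dependencies, set())            # ['design-api']
second_wave = ready_tasks(dependencies, {"design-api"})  # ['backend', 'frontend']
```

Once the design task is done, the backend and frontend tasks unblock together, which is the point at which parallel teammates pay off.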

Community Response: 'CEO of an AI Dev Team'

Developer community reaction is a mix of excitement and caution. A Reddit post with over 185 upvotes featured the analogy of feeling like "CEO of an AI dev team." Indeed, the workflow of giving natural language instructions to a team leader, monitoring each teammate's progress, and intervening when needed closely resembles a team manager's daily work.

Cost concerns are also significant. Since each teammate is an independent Claude instance, token usage scales roughly in proportion to team size. A community consensus is forming that Agent Teams is most effective for read-heavy tasks like reviews and research.
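The scaling argument can be made concrete with a rough estimator. It assumes every instance, including the lead, consumes a similar number of tokens, which is a simplification; the function name and the token figure are hypothetical.

```python
def estimate_team_tokens(tokens_per_instance, teammates, include_lead=True):
    """Rough linear estimate: every instance is assumed to use the same token budget."""
    instances = teammates + (1 if include_lead else 0)
    return tokens_per_instance * instances


# Hypothetical figure: 150,000 tokens per instance for one review task.
solo = estimate_team_tokens(150_000, teammates=0)  # 150_000
team = estimate_team_tokens(150_000, teammates=4)  # 750_000
```

Under this assumption a lead with four teammates uses five times the tokens of a solo session, so the team has to deliver a matching gain in speed or quality to be worth it.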

Current Limitations and Future Direction

Agent Teams is currently in research preview with known limitations. Session resumption doesn't restore existing teammates. Only one team per session is allowed, and teams cannot be nested: teammates cannot create teams of their own.

Since its introduction in v2.1.32, updates have been rapid. v2.1.33 added TeammateIdle and TaskCompleted hooks, and v2.1.36 introduced Opus 4.6 Fast mode for improved response speed. How quickly Anthropic moves this feature to general availability will be key going forward.

The Age of AI Teamwork, Born in the Terminal

Agent Teams demonstrates that the AI coding paradigm is shifting from "one smarter AI" to "multiple AIs collaborating." A world where 16 AIs build a compiler, 5 AIs debate debugging theories, and 3 AIs conduct code reviews simultaneously. This isn't science fiction. It's happening in terminals right now.

High token costs and experimental status remain constraints, but the direction is clear. The developer's role is moving from writing code directly to designing and orchestrating AI teams. Agent Teams is the most concrete prototype of that future.

- Anthropic - Orchestrate teams of Claude Code sessions

- Anthropic - Building a C compiler with a team of parallel Claudes

- Ars Technica - Sixteen Claude AI agents working together created a new C compiler

- InfoQ - Sixteen Claude Agents Built a C Compiler Without Human Code

- The Register - Anthropic's Claude Opus 4.6 spends $20K trying to write a C compiler