Claude Launches Auto-Generated Interactive Visuals in Chat: 1M Token Context Now Standard

Anthropic delivered back-to-back major updates: interactive Visual Responses that auto-generate charts and diagrams inline on March 12, followed by the general availability of 1M token context at standard pricing on March 13, setting a new bar for AI assistants.

Anthropic dropped two major announcements in as many days. On March 12, Claude gained the ability to generate interactive charts and diagrams directly inside conversations. The very next day, the company made its 1M token context window generally available at standard pricing, no premium tier required. Together, the updates represent a significant leap in what an AI assistant can do out of the box.

Interactive Visual Responses: Charts Born in Conversation

The new 'Visual Responses' feature lets Claude automatically generate interactive charts, idea maps, structural diagrams, periodic tables, compound interest curves, timelines, and more, all rendered inline within the conversation. Unlike image generation, these visuals are built with HTML and SVG, making them clickable and interactive.
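To make the HTML/SVG point concrete, here is a minimal sketch of how a compound interest curve could be expressed as SVG markup rather than as a rendered image. It is an illustration only, not Anthropic's rendering code: the function name `compound_curve_svg`, its parameters, and the styling choices are all hypothetical.

```python
def compound_curve_svg(principal: float, rate: float, years: int,
                       width: int = 320, height: int = 160) -> str:
    """Return SVG markup plotting principal * (1 + rate) ** t for t = 0..years.

    Hypothetical helper for illustration; assumes years >= 1.
    """
    values = [principal * (1 + rate) ** t for t in range(years + 1)]
    top = max(values)
    # Map each (year, value) pair to pixel coordinates; SVG y grows downward.
    coords = [(width * t / years, height - height * v / top)
              for t, v in enumerate(values)]
    line = " ".join(f"{x:.1f},{y:.1f}" for x, y in coords)
    # A <title> child gives each marker a native hover tooltip in browsers.
    dots = "".join(
        f'<circle cx="{x:.1f}" cy="{y:.1f}" r="3">'
        f'<title>Year {t}: {v:,.2f}</title></circle>'
        for t, ((x, y), v) in enumerate(zip(coords, values))
    )
    return (
        f'<svg xmlns="http://www.w3.org/2000/svg" viewBox="0 0 {width} {height}">'
        f'<polyline points="{line}" fill="none" stroke="steelblue" stroke-width="2"/>'
        f'{dots}</svg>'
    )


svg = compound_curve_svg(principal=1000, rate=0.05, years=10)
```

Because the output is markup, each data point remains an addressable element that a browser can attach tooltips or click handlers to, which a flat generated image cannot offer.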

Claude decides when a visual would be helpful and generates one automatically, though users can also request them directly. The feature launched in beta on March 12 and is enabled by default for all users, including the free tier.

Visual Responses vs. Artifacts: What's Different?

Claude already had Artifacts, its side-panel tool for generating standalone code, documents, and interactive components. Visual Responses serve a fundamentally different purpose. Artifacts are persistent, live in a separate panel, and are designed for reusable outputs. Visual Responses are ephemeral, appear inline, and blend naturally into the conversation flow.

Think of Artifacts as a workbench for building things, and Visual Responses as illustrations that make explanations clearer. When you ask Claude to explain compound interest, it might render an interactive curve right in its reply. When you ask it to build a React component, that still goes to Artifacts.

1M Context GA: Standard Pricing, No Beta Header

On March 13, Anthropic made the 1M context window generally available. The headline is pricing: Opus 4.6 costs $5 per million input tokens and $25 per million output tokens, and Sonnet 4.6 costs $3 and $15, whether a request uses 9K tokens or 900K. There is no long-context surcharge and no beta header to set; requests exceeding 200K tokens are handled automatically.

| Model | Input (per 1M tokens) | Output (per 1M tokens) | Context window |
|---|---|---|---|
| Opus 4.6 | $5 | $25 | 1M tokens |
| Sonnet 4.6 | $3 | $15 | 1M tokens |
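As a rough worked example of what flat pricing means in practice, the sketch below estimates the cost of a single request from the rates in the table above. The dictionary keys and the `request_cost` function are hypothetical labels for illustration, not API model identifiers, and the calculation ignores anything the article does not mention (caching, batch discounts, and so on).

```python
# USD per million tokens as (input, output), copied from the pricing table.
# The keys are illustrative labels, not official API model IDs.
PRICES = {
    "opus-4.6": (5.00, 25.00),
    "sonnet-4.6": (3.00, 15.00),
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Estimate the USD cost of one request at a flat per-token rate."""
    in_rate, out_rate = PRICES[model]
    return (input_tokens * in_rate + output_tokens * out_rate) / 1_000_000


# The same rates apply to a 9K-token prompt and a 900K-token prompt.
small = request_cost("opus-4.6", input_tokens=9_000, output_tokens=1_000)
large = request_cost("opus-4.6", input_tokens=900_000, output_tokens=1_000)
print(f"9K prompt:   ${small:.3f}")
print(f"900K prompt: ${large:.3f}")
```

Under these assumptions, cost scales linearly with token count: the 900K-token prompt costs more only because it contains more tokens, not because a higher rate kicks in past 200K.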

Media processing has also expanded: a single request can now include up to 600 images or PDFs, up from the previous limit of 100.

The long-context benchmark results are strong. On MRCR v2, a long-context retrieval benchmark, Claude scores 78.3%, the highest among frontier models. On the 8-needle 1M test, Opus 4.6 reaches 76%, compared with 18.5% for Sonnet 4.5.

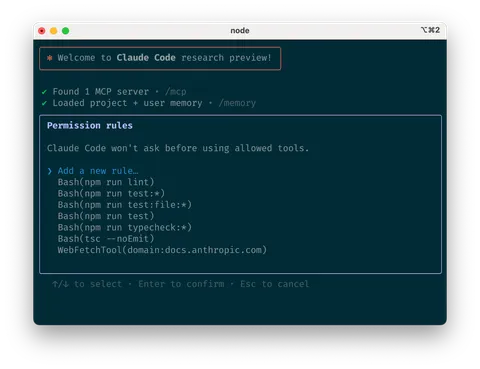

Claude Code Integration: 1M Context as Default

Claude Code v2.1.75 now uses 1M context by default for Max, Team, and Enterprise plans. For developers, this means Claude Code can process entire codebases, lengthy documentation sets, and complex multi-file projects without running into context limits that previously forced workarounds.

On SWE-bench Verified, the gold standard for coding agent evaluation, Opus 4.6 with Thinking scores 79.20%, claiming the top spot. The combination of massive context and benchmark-leading coding performance positions Claude Code as a serious contender in the AI-assisted development space.

Competitive Context: The Visualization Arms Race

Claude's Visual Responses arrive amid growing competition. OpenAI's ChatGPT has rolled out similar inline visualization capabilities, and Google's Gemini continues to push boundaries with its own multimodal features. The race to make AI assistants more visually expressive is accelerating across the industry.

However, Anthropic's 1M context pricing creates a different kind of competitive pressure. On the API side, the per-token rate is the same at 9K tokens as at 900K, with no surcharge for extended context. In Claude Code, 1M context is the default for Max ($100-$200/month), Team, and Enterprise plans, but not for Pro subscribers. Within Claude Code, that makes 1M context a premium-tier default rather than a universal feature; at the API level, the absence of per-token surcharges is a clear pricing advantage over competitors.

Setting a New Baseline

These two updates, delivered in rapid succession, signal Anthropic's intent to redefine what 'standard' means for AI assistants. Interactive visuals make Claude's responses richer without requiring users to learn new tools. 1M context with no API surcharge significantly lowers friction when working with large codebases and document sets.

Visual Responses still carry a beta label and are currently limited to desktop, which suggests there's more polish to come. But the direction is clear: Anthropic is betting that the next wave of AI adoption depends on making powerful capabilities feel effortless and accessible by default.

- Anthropic - Claude builds interactive visuals right in your conversation

- Anthropic - 1M context is now generally available

- Anthropic - Introducing Claude Opus 4.6

- 9to5Google - Claude added immersive visuals to chats

- Dataconomy - Claude Now Generates Charts And Diagrams To Explain Complex Data

- Anthropic - Claude Code Changelog