Claude Sonnet 4.6 Launches with Opus-Level Performance at Sonnet Pricing

Anthropic launched Claude Sonnet 4.6, which scores 79.6% on SWE-bench Verified, nearly matching Opus 4.6, at a 40% lower output price. With upgrades across coding, agentic tasks, and long-context reasoning, the model shifts the cost-performance trade-off for frontier AI.

Anthropic officially launched Claude Sonnet 4.6 on February 18. The model significantly outperforms its predecessor Sonnet 4.5 across nearly every dimension—coding, agentic tasks, and long-context reasoning—while delivering near-Opus 4.6 performance at Sonnet pricing. It's now the default model for Free and Pro users on claude.ai and Claude Cowork.

VentureBeat called it a 'tectonic repricing event in AI.' When you can access Opus-tier capabilities at significantly lower cost, the market implications are hard to overstate.

Benchmarks: Nearly Matching Opus 4.6

Sonnet 4.6 scored 79.6% on SWE-bench Verified, just 1.2 percentage points behind Opus 4.6's 80.8%. On OSWorld-Verified, which measures computer use capabilities, Sonnet 4.6 hit 72.5%—virtually identical to Opus 4.6's 72.7%.

More notably, Sonnet 4.6 surpasses Opus 4.6 in some areas. On GDPval-AA, an evaluation of real-world office and knowledge-work tasks, Sonnet 4.6 reached Elo 1633 versus Opus 4.6's 1606. In agentic financial analysis, it scored 63.3% against 60.1%. It is more than a cheaper alternative: in these specific domains it outperforms the flagship.

Coding Performance: Preferred by 70% of Claude Code Users

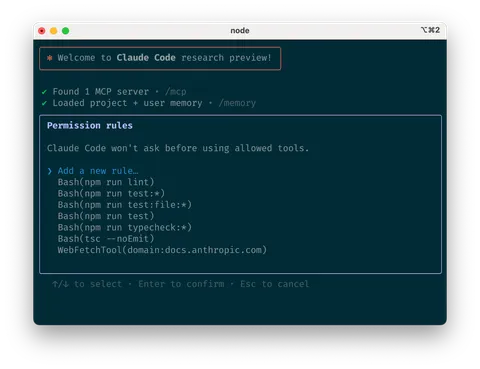

Coding improvements stand out the most. According to Anthropic, 70% of Claude Code users preferred Sonnet 4.6 over the previous Sonnet 4.5, while 59% chose it over Opus 4.5, the previous generation's top-tier model. Beyond code generation, practical improvements include reduced over-engineering, fewer false success claims, and lower hallucination rates.

Replit praised its 'remarkable performance-to-cost ratio,' Cursor noted 'noticeable improvements across the board,' and GitHub confirmed 'outstanding performance in complex code modifications.'

Pricing and Training Data: Unmatched Value

Sonnet 4.6's base pricing is $3/MTok input and $15/MTok output, identical to Sonnet 4.5. For long-context requests beyond 200K tokens, pricing rises to $6 input and $22.50 output. The Batch API halves the base rates to $1.50 input and $7.50 output, and prompt caching reads cost only $0.30/MTok. Compared to Opus 4.6 ($5 input, $25 output), Sonnet 4.6 is 40% cheaper at base rates, and its Batch API output rate is 70% below Opus 4.6's standard output rate.
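The percentages above can be checked directly from the quoted rates. The following is a minimal sketch, not an official pricing calculator: the dictionary names and both helper functions are illustrative assumptions, and only the dollar figures come from the article.

```python
# Per-million-token (MTok) rates in USD, copied from the figures quoted above.
# The variable names are illustrative, not API identifiers.
SONNET_46 = {"input": 3.00, "output": 15.00}
SONNET_46_LONG = {"input": 6.00, "output": 22.50}  # requests beyond 200K tokens
SONNET_46_BATCH = {"input": 1.50, "output": 7.50}
OPUS_46 = {"input": 5.00, "output": 25.00}


def cost_usd(rates, input_tokens, output_tokens):
    """Cost of one workload in USD, given per-MTok input and output rates."""
    total = input_tokens * rates["input"] + output_tokens * rates["output"]
    return total / 1_000_000


def percent_cheaper(price, reference):
    """How much lower `price` is than `reference`, as a percentage."""
    return round((1 - price / reference) * 100, 1)


if __name__ == "__main__":
    # Base output rate versus Opus 4.6's output rate.
    print(percent_cheaper(SONNET_46["output"], OPUS_46["output"]))        # 40.0
    # Batch output rate versus Opus 4.6's standard (non-batch) output rate.
    print(percent_cheaper(SONNET_46_BATCH["output"], OPUS_46["output"]))  # 70.0
    # Example workload: 1M input tokens and 200K output tokens.
    print(cost_usd(SONNET_46, 1_000_000, 200_000))  # 6.0
    print(cost_usd(OPUS_46, 1_000_000, 200_000))    # 10.0
```

Note that the 70% figure compares a batch rate with a standard rate, so it only applies to workloads that can tolerate asynchronous batch processing.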

The training data cutoff is also noteworthy. Sonnet 4.6 was trained with data through January 2026—more recent than Opus 4.6. It also supports a 1M-token context window (beta), enabling processing of large codebases and lengthy documents.

More Options at a Reasonable Price

Claude Sonnet 4.6 delivers near-Opus performance at a lower price and higher speed. It is well suited to Claude Code's upcoming agent teams feature, large codebase analysis, and complex agentic workflows. The model enables a flexible approach: default to Sonnet 4.6 for cost-efficient high performance, and reserve Opus for the tasks that truly need it.
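One way to read "default to Sonnet 4.6, reserve Opus" is as an escalate-on-failure pattern. The sketch below assumes that interpretation. The model labels are hypothetical placeholders rather than real API model IDs, and `call_model` and `is_acceptable` are caller-supplied functions, not part of any Anthropic SDK.

```python
from typing import Callable, Tuple

# Hypothetical labels for illustration; substitute real model IDs in practice.
DEFAULT_MODEL = "sonnet-4.6"
FALLBACK_MODEL = "opus-4.6"


def run_with_escalation(
    prompt: str,
    call_model: Callable[[str, str], str],
    is_acceptable: Callable[[str], bool],
) -> Tuple[str, str]:
    """Try the cheaper default first; escalate only if the answer is rejected.

    Returns (model_used, answer).
    """
    answer = call_model(DEFAULT_MODEL, prompt)
    if is_acceptable(answer):
        return DEFAULT_MODEL, answer
    # The default answer failed the caller's check, so pay for the larger model.
    return FALLBACK_MODEL, call_model(FALLBACK_MODEL, prompt)


if __name__ == "__main__":
    # Stand-in for a real API call, used only to demonstrate the control flow.
    def fake_call(model: str, prompt: str) -> str:
        return f"[{model}] {prompt}"

    model, _ = run_with_escalation("summarize", fake_call, lambda a: True)
    print(model)  # sonnet-4.6
    model, _ = run_with_escalation("summarize", fake_call, lambda a: False)
    print(model)  # opus-4.6
```

The acceptance check is where the real design work lies: a test suite passing, a schema validating, or a human review are all plausible criteria, and the article does not prescribe one.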