NVIDIA Announces NemoClaw, the Linux of Agentic AI

NVIDIA unveiled OpenClaw and NemoClaw at GTC 2026, declaring them the operating system for the agentic AI era. We analyze the full-spectrum strategy, which spans an open-source platform, an enterprise security layer, DGX Spark hardware, and the Nemotron Coalition.

"This will be the most popular open-source project in history." That was Jensen Huang's declaration when introducing OpenClaw at GTC 2026. A turning point as big as HTML, as big as Linux. It sounds like hyperbole, but examining the architecture of OpenClaw and NemoClaw reveals the basis for that confidence. An operating system for AI agents that navigate file systems, use software tools, and execute multi-step workflows. NVIDIA is moving beyond GPUs to seize the infrastructure of the agentic AI era.

"The Open-Source OS for Agentic Computing"

OpenClaw is an open-source framework that enables AI agents to operate computers. This goes far beyond generating text. Agents navigate file systems, execute terminal commands, call APIs, and autonomously carry out complex multi-step workflows. Jensen Huang defined it as "the open-source operating system for agentic computing."

"Every company needs an OpenClaw strategy. This is the new computer." — Jensen Huang, GTC 2026

The key is accessibility. Because OpenClaw is released as open source, anyone can use it. Just as Linux became the de facto standard for server operating systems, NVIDIA wants OpenClaw to become the de facto standard for AI agent platforms. As inference token volumes explode and agents spawn other agents, NVIDIA aims to be the foundation underneath.

NemoClaw Addresses Enterprise's Biggest Anxiety

The biggest problem with open-source agent frameworks is security. The moment you give an AI agent file system access and code execution privileges, you create risks of corporate data leaks and malicious command execution. NemoClaw is the enterprise security layer targeting precisely this concern.

| Component | Function | Effect |
|---|---|---|
| OpenShell | Process-level isolation, least-privilege access control | Strictly limits agent behavior scope |
| Privacy Router | Determines inference execution location (local vs cloud) | Prevents sensitive data from leaving the organization |
| Nemotron Integration | Native NVIDIA language model integration | Reduces external API dependency |

OpenShell isolates each agent at the process level and applies the principle of least privilege. It acts as a sandbox that restricts agents to the files they need and the commands they are permitted to run. Privacy Router automatically decides whether to process an inference request locally or send it to the cloud. Sensitive information such as medical records or financial data stays local, while general inference can draw on larger cloud models.
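To make the least-privilege idea concrete, here is a minimal Python sketch of a deny-by-default policy check. It is not OpenShell's API, which this article does not document. `AgentPolicy`, `can_read`, and `can_run` are hypothetical names invented for illustration, and the sketch only covers the allowlist decision, not process isolation itself.

```python
from __future__ import annotations

import posixpath
import shlex
from dataclasses import dataclass
from pathlib import PurePosixPath


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical per-agent allowlist (illustrative, not an OpenShell type)."""

    readable_roots: tuple[str, ...]
    allowed_commands: frozenset[str]

    def can_read(self, path: str) -> bool:
        # Collapse "." and ".." first so a path cannot climb out of an allowed root.
        target = PurePosixPath(posixpath.normpath(path))
        for root in self.readable_roots:
            root_path = PurePosixPath(posixpath.normpath(root))
            if target == root_path or root_path in target.parents:
                return True
        return False  # deny by default, including relative paths

    def can_run(self, command_line: str) -> bool:
        # Checks only the executable name. This assumes the caller later runs
        # the parsed argv directly, without handing the string to a shell.
        try:
            argv = shlex.split(command_line)
        except ValueError:  # e.g. unbalanced quotes
            return False
        return bool(argv) and argv[0] in self.allowed_commands


policy = AgentPolicy(
    readable_roots=("/workspace/project",),
    allowed_commands=frozenset({"ls", "git"}),
)
print(policy.can_read("/workspace/project/src/main.py"))
print(policy.can_read("/workspace/project/../secrets.env"))
print(policy.can_run("git status"))
print(policy.can_run("curl http://example.com"))
```

The design choice worth noting is the default: anything not explicitly granted is refused, which is what "least privilege" means in practice.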

Notably, NemoClaw is hardware-agnostic — it runs without NVIDIA GPUs. This reads as a commitment to dominating the software ecosystem rather than relying solely on GPU sales. While optimized performance on NVIDIA hardware is expected, lowering the entry barrier to grow the ecosystem is the chosen strategy.

DGX Spark: An AI Supercomputer on Your Desk

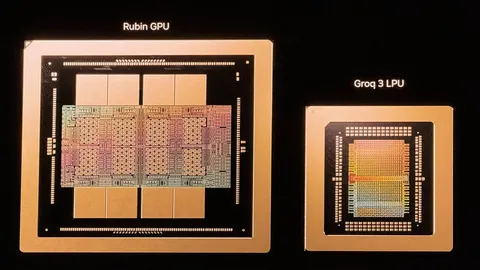

Completing NemoClaw's vision requires hardware capable of running large models locally. NVIDIA unveiled the final puzzle piece at GTC. DGX Spark is a $4,699 personal AI supercomputer with 128GB of unified memory, capable of running 100-billion-parameter models locally.

Running NemoClaw on DGX Spark creates a complete local AI agent development environment. The result is a hybrid architecture: Privacy Router processes sensitive data through local Nemotron models while routing complex inference to the cloud. For enterprises that need more scale, DGX Station offers 784GB of memory and handles models of up to a trillion parameters.
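The local-versus-cloud decision described above can be sketched in a few lines of Python. This is an illustration only: NVIDIA has not published Privacy Router's interface in the material covered here, so `InferenceRequest`, `Route`, `choose_route`, and the patterns below are all assumptions made for the example. A real router would need a far more thorough sensitivity classifier.

```python
import re
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    LOCAL = "local"   # e.g. a model running on the desk-side machine
    CLOUD = "cloud"   # e.g. a larger hosted model


# Illustrative patterns only (assumed for this sketch).
SENSITIVE_PATTERNS = (
    re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),  # US SSN-like number
    re.compile(r"\b(patient|diagnosis|medical record|account number)\b", re.IGNORECASE),
)


@dataclass(frozen=True)
class InferenceRequest:
    prompt: str
    tagged_sensitive: bool = False  # explicit label supplied by the caller


def choose_route(request: InferenceRequest) -> Route:
    """Keep anything that looks sensitive local; send the rest to the cloud."""
    if request.tagged_sensitive:
        return Route.LOCAL
    if any(pattern.search(request.prompt) for pattern in SENSITIVE_PATTERNS):
        return Route.LOCAL
    return Route.CLOUD


for prompt in (
    "Summarize the patient chart for room 4",
    "Explain quicksort in two sentences",
):
    print(prompt, "->", choose_route(InferenceRequest(prompt)).value)
```

An explicit `tagged_sensitive` flag is checked before any pattern matching, so a caller who already knows the data is sensitive never depends on the heuristics.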

| Model | Memory | Price | Max model size (local) |
|---|---|---|---|
| DGX Spark | 128GB unified | $4,699 | 100B parameters |
| DGX Station | 784GB | TBA | 1T parameters |
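A quick back-of-the-envelope check shows what the figures in the table imply about numeric precision. The Python sketch below counts weight storage only and ignores KV cache, activations, and runtime overhead. The bit widths are assumptions for illustration, since the announcement as reported here does not say which precision the parameter counts refer to.

```python
BYTES_PER_GB = 10**9  # decimal gigabytes


def weight_memory_gb(num_params: int, bits_per_param: int) -> float:
    """Memory needed for the weights alone, in GB."""
    return num_params * bits_per_param / 8 / BYTES_PER_GB


def fits(num_params: int, bits_per_param: int, memory_gb: float) -> bool:
    """True if the weights alone fit in the given memory."""
    return weight_memory_gb(num_params, bits_per_param) <= memory_gb


machines = {
    "DGX Spark": (128, 100_000_000_000),        # 128GB, 100B parameters
    "DGX Station": (784, 1_000_000_000_000),    # 784GB, 1T parameters
}

for name, (memory_gb, params) in machines.items():
    for bits in (16, 8, 4):
        need = weight_memory_gb(params, bits)
        verdict = "fits" if fits(params, bits, memory_gb) else "does not fit"
        print(f"{name}: {bits}-bit weights need {need:.0f} GB, {verdict} in {memory_gb} GB")
```

Under these assumptions, a 100B-parameter model needs 200 GB at 16-bit precision, so it only fits in 128GB when quantized to 8 bits (100 GB) or lower. A trillion-parameter model fits in 784GB only at around 4 bits per weight (500 GB).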

NVIDIA Rallies Open Model Alliance Through Nemotron Coalition

NVIDIA's strategy doesn't end with hardware and software. The Nemotron Coalition is an open frontier model alliance of eight AI research labs. Perplexity, Black Forest Labs, Cursor, LangChain, and Mistral AI are among the participants. They jointly train models on NVIDIA's DGX Cloud and release the results as open source.

The alliance's significance extends beyond technical collaboration. NVIDIA provides DGX Cloud computing resources, while participating labs advance models in their respective specialties. LangChain excels in agent orchestration, Cursor in coding agents, and Perplexity in search reasoning. As they optimize Nemotron-based models for their domains, the entire NemoClaw ecosystem's competitiveness rises. If OpenClaw is Linux, the Nemotron Coalition is its Linux Foundation.

From GPU Company to AI Platform Enterprise

The launch of NemoClaw and OpenClaw symbolizes a transformation in NVIDIA's identity, from a semiconductor company selling GPUs to an enterprise defining the platform for the agentic AI era. The strategy has three parts: an open-source framework to spread the ecosystem, an enterprise security layer to generate revenue, and hardware plus a model coalition to solidify it.

As with Linux, the real battle is won not by the technology itself but by the ecosystem. Whether NVIDIA can capture the operating system of agentic AI depends on how many developers adopt OpenClaw. What's clear is that the era where agents spawn agents and autonomously use tools has already begun. NVIDIA has declared its intention to lay the foundation for that era.
