Gemini 3.1 Pro Launch: A SOTA-Level Leap in Reasoning and Multimodal AI

Google has unveiled Gemini 3.1 Pro, which posts a dominant 77.1% on the ARC-AGI-2 reasoning benchmark and cuts its hallucination rate on AA-Omniscience from 88% to 50%. However, long-context retention and search accuracy remain areas for improvement.

On February 19, Google released Gemini 3.1 Pro (Preview). Building on November's Gemini 3 Pro, it posts notable gains across nearly every reasoning, coding, and science benchmark. Most notably, it scored 77.1% on the abstract reasoning benchmark ARC-AGI-2, more than doubling the previous version's result.

It also took the top spot in the Artificial Analysis Intelligence Index v4.0 with 57 points, ahead of Claude Opus 4.6 (53 points) and GPT-5.2. Notably, Google achieved this at less than half the cost of competing models. The model is available as a public preview in Google AI Studio, Gemini CLI, and Vertex AI.

Benchmark Results: A Dominant Lead in Reasoning and Coding

Gemini 3.1 Pro's strongest results came in reasoning benchmarks. It scored 77.1% on ARC-AGI-2, which measures abstract reasoning, well ahead of Claude Opus 4.6 (68.8%) and GPT-5.2 (52.9%). It also set a new high of 44.4% on Humanity's Last Exam.

Coding performance saw similar gains. It reached an Elo of 2887 on LiveCodeBench Pro, up nearly 450 points from its predecessor (2439), and topped Terminal-Bench 2.0 at 68.5%. On SWE-Bench Verified, it scored 80.6%, virtually tied with Claude Opus 4.6 (80.8%).

In science, it achieved 94.3% on GPQA Diamond, ahead of all competitors. JetBrains reported a "15% quality improvement," calling the model "stronger, faster, and more efficient," while Databricks reported best-in-class performance on its OfficeQA benchmark.

Multimodal Vision Strengths and Hallucination Reduction

Gemini 3.1 Pro also showed consistent strength in multimodal tasks. It scored 80.5% on MMMU-Pro, ahead of GPT-5.2 (79.5%) and Claude Opus 4.6 (73.9%). Cartwheel noted a significant improvement in understanding 3D transformations. The community consensus is that the model holds a clear advantage in image and document analysis and in vision-based coding.

Perhaps more significant is the drop in hallucinations. On the AA-Omniscience test, the hallucination rate fell from 88% to 50%, a reduction of 38 percentage points. Google attributes this not to "knowing more" but to "being honest about what it doesn't know": rather than fabricating answers, the model now admits uncertainty.
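The difference between "knowing more" and "admitting uncertainty" can be made concrete with a small calculation. The sketch below assumes the hallucination rate is computed as wrong answers divided by all responses that were not correct (wrong answers plus abstentions). That is our reading of how Artificial Analysis describes the metric, not its published code, and the counts are hypothetical, chosen only to reproduce the 88% and 50% figures.

```python
from dataclasses import dataclass


@dataclass
class EvalCounts:
    """Outcome counts on a question set (hypothetical, not real benchmark data)."""
    correct: int
    incorrect: int
    abstained: int  # questions where the model declined or said it didn't know

    @property
    def accuracy(self) -> float:
        total = self.correct + self.incorrect + self.abstained
        return self.correct / total

    @property
    def hallucination_rate(self) -> float:
        # ASSUMED definition: share of non-correct responses that were wrong
        # answers rather than abstentions, i.e. incorrect / (incorrect + abstained).
        not_correct = self.incorrect + self.abstained
        return self.incorrect / not_correct if not_correct else 0.0


# Both hypothetical models answer the same 400 of 1,000 questions correctly.
old = EvalCounts(correct=400, incorrect=528, abstained=72)
new = EvalCounts(correct=400, incorrect=300, abstained=300)

for name, r in (("old", old), ("new", new)):
    print(f"{name}: accuracy={r.accuracy:.0%}, hallucination rate={r.hallucination_rate:.0%}")
```

Both hypothetical models score 40% accuracy, yet the hallucination rate falls from 88% to 50% purely because confident wrong answers become abstentions, which is the distinction Google draws.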

On BrowseComp, which measures search and retrieval capabilities, it scored 85.9%, narrowly edging out Claude Opus 4.6 (84.0%). Performance on complex tasks that combine search and reasoning has improved across the board.

Remaining Challenges: Long Context and Search Reliability

Despite impressive benchmark results, real-world usage still reveals shortcomings. The issue the community cites most often is long-context retention. Although the model officially supports 1 million input tokens, users consistently report noticeable performance degradation beyond 300,000 tokens. Users also report the model forgetting earlier context in extended conversations. This is especially critical for coding, where keeping a large codebase in context is essential.

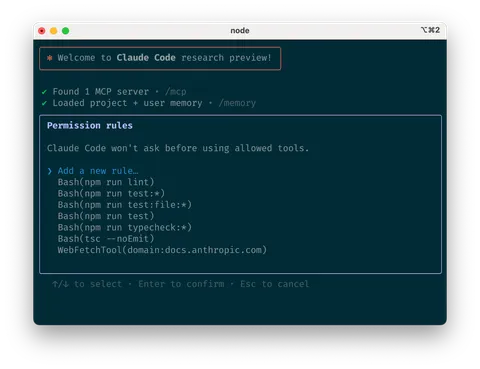

The coding tool ecosystem is another hurdle. Benchmark scores place the model in the top tier for coding, but the developer experience depends on more than raw model performance. Compared with mature coding tools such as OpenAI's Codex and Anthropic's Claude Code, Gemini CLI is still at an early stage. Without robust tooling and seamless integration into development workflows, benchmark superiority may not translate into a real-world competitive advantage.

Search is not fully reliable either. Despite the high BrowseComp score, the model sometimes fails to retrieve recent information in real-world use. There are also reports of hallucinations persisting when the model is used without Deep Think (extended reasoning) mode. Some users have also pointed to session stability issues and an occasional tendency toward "lazy" responses.

Reclaiming the SOTA Crown, but Not Yet Complete

Gemini 3.1 Pro is undeniably one of the most powerful AI models available today. It took first place in 6 of 10 major benchmark categories spanning reasoning, coding, science, and multimodal tasks, with especially meaningful progress in abstract reasoning and hallucination reduction. Factor in price-to-performance, and its case becomes even more compelling.

However, challenges remain in real-world areas including long-context retention, search reliability, and session persistence. How quickly Google can close the gap between benchmark supremacy and actual user experience will be crucial for the official release. The three-way race among Claude Opus 4.6, GPT-5.2, and now Gemini 3.1 Pro has made competition between AI models more intense than ever.

- Google Blog - Gemini 3.1 Pro: Our most capable model yet

- VentureBeat - Google launches Gemini 3.1 Pro, retaking AI crown with 2x reasoning

- Ars Technica - Google announces Gemini 3.1 Pro, says it's better at complex problem solving

- OfficeChai - Google Gemini 3.1 Pro takes top spot in Artificial Analysis Intelligence Index