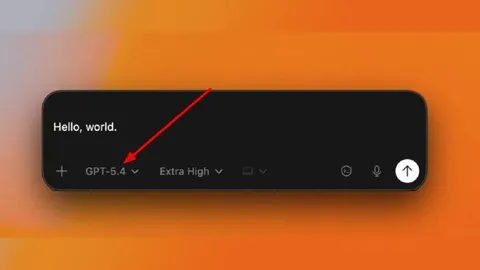

GPT-5.4 Launches to Mixed Reactions: Leading in Computer Use, Falling Behind in Coding

OpenAI launched GPT-5.4 with superhuman computer use capabilities, but the model falls behind Claude Opus 4.6 on key coding benchmarks, and OpenAI did not even publish SWE-bench Verified results. Once the undisputed leader, where does OpenAI stand now?

On March 5, 2026, OpenAI officially launched GPT-5.4. It comes in three variants (the base model, Pro, and Thinking) and features native computer use capabilities and a 1M token context window. GPT-5.4 is undeniably impressive. But the picture is a far cry from a year ago, when OpenAI swept every benchmark in sight.

OpenAI once again showcased dazzling benchmark numbers, yet conspicuously absent was the most prominent coding benchmark: SWE-bench Verified. Outpaced by Claude Opus 4.6 in coding and Gemini 3.1 Pro in reasoning, GPT-5.4 shows clear strengths in agentic and computer use tasks, but the title of 'undisputed champion' is already a relic of the past.

1. GPT-5.4 Key Features: Native Computer Use and Tool Search

The most notable new feature of GPT-5.4 is native computer use. Built on Playwright, it can read screenshots, execute mouse and keyboard commands, and automate tasks across multiple applications. OpenAI describes it as "the first general-purpose model with native computer use capabilities."

On the OSWorld-Verified benchmark, GPT-5.4 scored 75.0%, surpassing the human performance baseline of 72.4% and marking a massive leap from GPT-5.2's 47.3%. On this benchmark, at least, the model now completes desktop tasks more accurately than the human baseline.

The second major feature is Tool Search. Previously, all tool definitions had to be included in the prompt, but Tool Search enables on-demand loading of only the necessary tools, reducing token usage by 47%. For developers, this means simultaneous cost savings and efficiency gains.
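The idea of on-demand tool loading can be illustrated with a toy sketch. This is not OpenAI's implementation or API: the `Tool` class, the catalogue, the keyword matching, and the token counts below are all hypothetical, chosen only to show why sending a few matching definitions is cheaper than sending every definition up front.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    name: str
    keywords: frozenset  # words that should trigger this tool (hypothetical)
    schema_tokens: int   # made-up size of the full tool definition, in tokens


# Hypothetical catalogue; names and token counts are illustrative only.
CATALOGUE = [
    Tool("get_weather", frozenset({"weather", "forecast", "temperature"}), 180),
    Tool("send_email", frozenset({"email", "send", "mail"}), 220),
    Tool("query_database", frozenset({"sql", "database", "query"}), 350),
    Tool("create_calendar_event", frozenset({"calendar", "meeting", "event"}), 250),
]


def search_tools(query: str, catalogue: list, limit: int = 2) -> list:
    """Return up to `limit` tools whose keywords overlap the query, best match first."""
    words = set(query.lower().split())
    scored = [(len(words & tool.keywords), tool) for tool in catalogue]
    hits = [pair for pair in scored if pair[0] > 0]
    hits.sort(key=lambda pair: pair[0], reverse=True)
    return [tool for _, tool in hits[:limit]]


def definition_tokens(tools: list) -> int:
    """Total prompt tokens spent on tool definitions."""
    return sum(tool.schema_tokens for tool in tools)


selected = search_tools("send an email to the team", CATALOGUE)
upfront = definition_tokens(CATALOGUE)   # every definition in the prompt
on_demand = definition_tokens(selected)  # only the definitions that matched

print([tool.name for tool in selected], upfront, on_demand)
```

The saving in this toy catalogue depends entirely on the invented token counts, so it says nothing about the 47% figure OpenAI reports; it only shows the mechanism.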

Additional improvements include a 1M token context window (with 2x pricing above 272K tokens), a 33% reduction in hallucinations, and 18% fewer response errors overall. On GDPval, a professional task benchmark, GPT-5.4 scored 83.0%, significantly outperforming the previous model's 70.9%.
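To see what the long-context surcharge means in practice, here is a rough cost sketch. Two things in it are assumptions rather than facts from the announcement: the base price is a placeholder, and the 2x multiplier is assumed to apply to the whole prompt once it exceeds 272K tokens (the article does not say whether it applies to the whole prompt or only to the tokens beyond the threshold).

```python
LONG_CONTEXT_THRESHOLD = 272_000  # tokens, as quoted in the article
LONG_CONTEXT_MULTIPLIER = 2.0     # "2x pricing", as quoted in the article
BASE_PRICE_PER_MILLION = 1.00     # hypothetical placeholder, not a real price


def input_cost(prompt_tokens: int, base_price: float = BASE_PRICE_PER_MILLION) -> float:
    """Estimate input cost for one request.

    Assumption: a prompt strictly above the threshold is billed entirely
    at the multiplied rate; prompts at or below it pay the base rate.
    """
    rate = base_price
    if prompt_tokens > LONG_CONTEXT_THRESHOLD:
        rate *= LONG_CONTEXT_MULTIPLIER
    return prompt_tokens / 1_000_000 * rate


for tokens in (200_000, 272_000, 500_000):
    print(tokens, round(input_cost(tokens), 3))
```

Under these assumptions, a prompt just over the threshold costs roughly twice as much as one just under it, so keeping requests below 272K tokens matters more than the headline 1M window suggests.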

2. SWE-bench Verified Omission: Cherry-Picking Favorable Benchmarks?

The most controversial aspect of the GPT-5.4 announcement was the absence of SWE-bench Verified results. The benchmark has been the gold standard for measuring AI coding ability, with every major AI company competing on it. Yet OpenAI chose not to publish GPT-5.4's scores.

There's a backstory. On February 23, 2026, OpenAI declared it would discontinue evaluation on SWE-bench Verified. Its internal audit found that 59.4% of the audited test cases were flawed and rejected functionally correct submissions. The company also flagged training data contamination, noting that all frontier models could reproduce the original human-written bug fixes used as ground truth.

Instead, OpenAI only published results on SWE-bench Pro, developed by Scale AI. The problem? The scores are underwhelming. GPT-5.4 scored 57.7% on SWE-bench Pro, a mere 0.9-percentage-point improvement over GPT-5.3-Codex's 56.8%. The two benchmarks are not directly comparable, but with Claude Opus 4.6 holding 80.8% on SWE-bench Verified and no GPT-5.4 score published there, the perceived gap remains stark.
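Percentage-point gains and relative gains are easy to conflate, so it helps to compute both for the before-and-after figures quoted in this article. The helper below is just arithmetic on those reported numbers; the function name and labels are illustrative.

```python
def improvement(old: float, new: float) -> tuple:
    """Return (percentage-point gain, relative gain in percent), rounded to one decimal."""
    points = new - old
    relative = points / old * 100
    return round(points, 1), round(relative, 1)


# Before/after scores as reported in the article.
REPORTED = {
    "OSWorld-Verified (GPT-5.2 -> GPT-5.4)": (47.3, 75.0),
    "GDPval (previous model -> GPT-5.4)": (70.9, 83.0),
    "SWE-bench Pro (GPT-5.3-Codex -> GPT-5.4)": (56.8, 57.7),
}

for label, (old, new) in REPORTED.items():
    points, relative = improvement(old, new)
    print(f"{label}: +{points} points, +{relative}% relative")
```

Seen this way, the contrast is sharp: the computer use score rose by 27.7 points (about 58.6% in relative terms), while the coding score moved by 0.9 points (about 1.6%).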

Critics argue this is textbook cherry-picking: abandoning an unfavorable benchmark and migrating to one that tells a better story. While the structural issues with SWE-bench Verified are real, the timing of the retirement announcement, which came precisely when OpenAI's standing was weakest, has not gone unnoticed.

3. The SOTA Race: No Single Winner Across All Benchmarks

As of March 2026, the AI benchmark landscape is completely fragmented. No single model dominates every category. In coding, Claude Opus 4.6 holds a firm lead with 80.8% on SWE-bench Verified. In reasoning, Gemini 3.1 Pro leads with 94.3% on GPQA Diamond.

GPT-5.4's strengths lie in agentic and computer use tasks. It surpassed human performance with 75.0% on OSWorld-Verified and led the Toolathlon agent benchmark at 54.6%, well ahead of Claude at 44.8%. It also excels in professional tasks with 83.0% on GDPval.

On the challenging ARC-AGI-2 reasoning benchmark, GPT-5.4 Pro scored 83.3%, ahead of Gemini 3.1 Pro (77.1%) and Claude Opus 4.6 (75.2%). However, this score comes from the most expensive Pro variant, which is inaccessible to most users.

The bottom line: coding belongs to Claude, reasoning to Gemini, and agentic/computer use to GPT-5.4. A three-way split has emerged. "No single winner across all benchmarks" is the current reality of the AI industry.

4. The Undisputed Leader of a Year Ago: Where Did It Go?

Remember the GPT-4o era? In mid-2024, OpenAI held the undisputed top spot across virtually every benchmark. Coding, reasoning, creative tasks, tool use: ChatGPT was the reference point, and competitors chased its shadow.

Then Anthropic evolved rapidly, from Claude 3.5 Sonnet through Claude 4 to Opus 4.6, overtaking OpenAI in coding. Google closed the gap with Gemini 2.0 through 3.1 Pro, catching up in reasoning and cost efficiency. As of 2026, OpenAI has either lost the lead or is competing on razor-thin margins in every domain.

A deeper problem is credibility. There has been persistent criticism about the lack of independent verification for OpenAI's self-reported benchmark figures. The pattern of retiring SWE-bench Verified when it became unfavorable, combined with apparent benchmark selection bias, has cast doubt on the numbers themselves. Gizmodo's headline described OpenAI as "in desperate need of a win."

Closing Thoughts: After Code Red, an Unproven Throne

Since the internal "Code Red" triggered by Google's Gemini announcement in late 2025, OpenAI has been in a perpetual state of catch-up. The company shipped GPT-5 series models from 5.0 through 5.1, 5.2, 5.3, and now 5.4 in just seven months, yet never managed to reclaim SOTA across every category.

GPT-5.4 demonstrates genuine strengths in computer use and agentic tasks. Surpassing human performance on OSWorld is a real achievement. But falling behind Claude on core coding benchmarks, and not even publishing SWE-bench Verified results, is tantamount to conceding the weakness.

The AI model race is no longer a game fought over a single throne. A multipolar era has arrived, with different models leading in coding, reasoning, agentic tasks, and professional work. The challenge ahead for OpenAI is clear: leverage GPT-5.4's agentic strengths while closing the gap in coding and reasoning. The road back to undisputed number one still looks long and uphill.

- OpenAI - Introducing GPT-5.4

- TechCrunch - OpenAI launches GPT-5.4 with Pro and Thinking versions

- Gizmodo - OpenAI in Desperate Need of a Win, Launches GPT-5.4

- The Decoder - OpenAI wants to retire the AI coding benchmark that everyone has been competing on

- DigitalApplied - GPT-5.4 vs Opus 4.6 vs Gemini 3.1 Pro: Best Frontier Model

- Every.to - Vibe Check: OpenAI Is Back

- IsItGoodAI - GPT-5.4 Review