GPT-5.3 Instant: How OpenAI Made a Less Cringe AI

OpenAI released GPT-5.3 Instant on March 3 as the default free-tier model, trimming disclaimers and cutting hallucinations in high-risk queries by up to 26.8%. But declining safety scores and paid-user frustration over back-to-back coding-only releases have pushed OpenAI toward a GPT-5.4 announcement.

OpenAI released GPT-5.3 Instant on March 3. It immediately became the default conversation model for free-tier ChatGPT users and is accessible via the API as gpt-5.3-chat-latest. The update's core mission boils down to a single phrase: "less cringe."
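For readers who want to try the model through the API, here is a minimal sketch using only the Python standard library. It assumes the model is served through OpenAI's standard Chat Completions endpoint and that an API key is stored in the OPENAI_API_KEY environment variable. The function names are illustrative, and only the model identifier comes from the announcement.

```python
import json
import os
import urllib.request

# Assumption: the model is reachable through the standard Chat Completions endpoint.
API_URL = "https://api.openai.com/v1/chat/completions"
MODEL = "gpt-5.3-chat-latest"  # identifier as quoted in the article


def build_chat_request(prompt: str) -> dict:
    """Build the JSON body for a single-turn chat request."""
    return {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
    }


def send_chat_request(prompt: str) -> str:
    """Send the request and return the assistant's reply text.

    Assumes OPENAI_API_KEY is set in the environment.
    """
    body = json.dumps(build_chat_request(prompt)).encode("utf-8")
    request = urllib.request.Request(
        API_URL,
        data=body,
        headers={
            "Authorization": f"Bearer {os.environ['OPENAI_API_KEY']}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        payload = json.load(response)
    # The first choice carries the assistant message in this response format.
    return payload["choices"][0]["message"]["content"]
```

Calling send_chat_request("Is ibuprofen safe with coffee?") would return the model's reply as a string, which makes it easy to compare tone against an older model by changing MODEL.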

What qualifies as cringe? The disclaimers appended to every answer ("but I'm an AI, so please consult a professional"), the excessive refusals triggered by harmless questions, and the preachy moral lectures nobody asked for. OpenAI targeted all three systematically.

1. Key Changes in GPT-5.3 Instant: Reworking the Tone

The biggest shift in GPT-5.3 Instant is the response tone. OpenAI zeroed in on the three patterns users complained about most: unnecessary disclaimers tacked onto every answer, an overzealous refusal reflex that fired even on innocuous questions, and unsolicited moral context that turned responses preachy.

According to TechCrunch, GPT-5.3 Instant also dramatically reduced passive-aggressive phrases like "please calm down." When a user asks a question while frustrated, the model now answers the request directly rather than trying to manage emotions. OpenAI describes this as a "model personality" level adjustment, emphasizing it was a fundamental change at the training stage, not a simple prompt tweak.

2. Hallucination Benchmarks: 26.8% Improvement in High-Risk Fields

Tone improvement is only part of the story. GPT-5.3 Instant also posted meaningful gains on hallucination metrics. According to OpenAI's published benchmarks, hallucination rates dropped 26.8% in high-risk queries (medical, legal, financial) when paired with web search, and 19.7% without web search.

User-reported error rates improved as well: 22.5% lower with web search and 9.6% lower without. VentureBeat noted that these figures suggest "OpenAI is starting to measure hallucination through real-usage feedback loops, not just benchmarks."

| Metric | With Web Search | Without Web Search |
|---|---|---|
| High-risk hallucination rate reduction | 26.8% | 19.7% |
| User-reported error rate reduction | 22.5% | 9.6% |
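These figures are relative reductions, so what they mean in absolute terms depends on a baseline rate that the published numbers do not include. The sketch below uses a purely hypothetical baseline of 10 hallucinated answers per 100 high-risk queries to show how the arithmetic works. The baseline and function names are assumptions for illustration, not OpenAI data.

```python
def apply_relative_reduction(baseline_rate: float, reduction_pct: float) -> float:
    """Return the rate that remains after a relative reduction of reduction_pct percent."""
    return baseline_rate * (1 - reduction_pct / 100)


def relative_reduction_pct(before: float, after: float) -> float:
    """Return the relative reduction, in percent, going from `before` to `after`."""
    return (before - after) / before * 100


# Hypothetical baseline: 10% of high-risk answers contain a hallucination.
HYPOTHETICAL_BASELINE = 10.0

with_search = apply_relative_reduction(HYPOTHETICAL_BASELINE, 26.8)
without_search = apply_relative_reduction(HYPOTHETICAL_BASELINE, 19.7)

print(f"With web search:    {with_search:.2f}%")
print(f"Without web search: {without_search:.2f}%")
```

Under that assumed baseline, a 26.8% relative reduction leaves a rate of roughly 7.3%, not a drop of 26.8 percentage points.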

3. Safety Guardrail Scores Drop: The Debate and OpenAI's Response

However, some of GPT-5.3 Instant's safety metrics declined. Sexual content filtering accuracy fell by 6.0 percentage points, and violent content filtering dropped by 7.1 percentage points. The gap appears to stem from a discrepancy between offline evaluations and online testing.

OpenAI stated it is "investigating the discrepancy between offline evals and online tests." In other words, internal testing showed safety levels holding steady, but the live deployment environment produced different results. The company said it would continue monitoring the metrics and evaluate follow-up measures.

4. The GPT-5.3 Series Controversy: Two Coding Models in a Row

GPT-5.3 Instant is the third model in the GPT-5.3 series. GPT-5.3 Codex (code generation) launched February 5, Codex-Spark (lightweight coding model) followed February 12, and the general conversation model Instant didn't arrive until March 3. The issue was the release order. Two coding-specialized models shipped back to back, while the general conversation model that most users actually rely on daily was pushed back by nearly a month.

"Do non-developers even matter to OpenAI?" became a common refrain in community forums. The frustration was amplified by the fact that both Codex and Codex-Spark are paid-subscriber-only coding models. For the majority of paying users who don't code, it meant nearly two months without a meaningful update. GPT-5.2 Instant remains available as a legacy model until June 3, but that has done little to dispel the perception that general users were deprioritized.

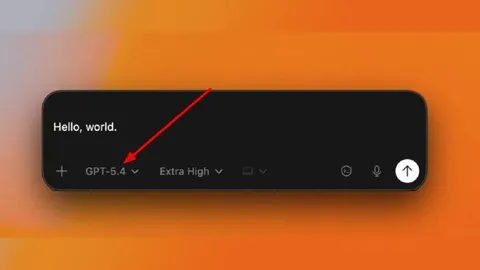

5. GPT-5.4 Teased and the Gemini 3.1 Flash Factor

As general-user frustration grew, signs emerged that GPT-5.4 is imminent. OpenAI has made no official announcement yet, but references to GPT-5.4 leaked twice from the company's public Codex GitHub repository. A February 27 PR set the minimum model version for full-resolution vision support to (5, 4), and a March 2 PR contained a "toggle Fast mode for GPT-5.4" reference. Both were scrubbed within hours.
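To see why a value like (5, 4) points to an unreleased model, consider how a version gate of this kind typically works. The sketch below is a hypothetical illustration in Python, not the code from the Codex repository: the helper names and the model-name pattern are assumptions, and only the (5, 4) threshold comes from the leaked PR as reported.

```python
import re
from typing import Tuple

# Threshold quoted in the article; everything else here is illustrative.
MIN_FULL_RES_VISION: Tuple[int, int] = (5, 4)


def parse_model_version(model_name: str) -> Tuple[int, int]:
    """Extract (major, minor) from names such as 'gpt-5.3-codex' (hypothetical helper)."""
    match = re.match(r"gpt-(\d+)\.(\d+)", model_name)
    if match is None:
        raise ValueError(f"unrecognized model name: {model_name!r}")
    return int(match.group(1)), int(match.group(2))


def supports_full_res_vision(model_name: str) -> bool:
    """Tuples compare element by element, so (5, 3) < (5, 4) < (6, 0)."""
    return parse_model_version(model_name) >= MIN_FULL_RES_VISION
```

With a gate like this, every model in the GPT-5.3 series would fail the check, so the threshold only makes sense if a 5.4 model exists or is about to.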

The timing of the Instant release also reads as a competitive response. Google's Gemini 3.1 Flash has been delivering strong performance in general conversation, pressuring OpenAI to rapidly close the gap in everyday user experience. The rushed Instant release appears to be a direct answer to that competitive threat.

Conclusion: Why AI's Tone Matters

The core story of GPT-5.3 Instant is not a benchmark race. It is OpenAI acknowledging how much the way AI speaks shapes user experience. Remove unnecessary disclaimers and preachy language, and users trust the AI more and use it more often.

But three challenges remain: declining safety guardrail scores, general users alienated by a coding-model-first rollout, and a conversation-model race with Gemini. Making AI "less cringe" should not mean making it less safe, and prioritizing coding models while sidelining general users is not a sustainable strategy. How OpenAI answers all of these with GPT-5.4 is the real story to watch.