GPT-5.4 One Week Later: How Does the Industry Rate It?

One week after GPT-5.4's launch, industry reactions are split between 'OpenAI is Back' enthusiasm and 'just catching up' skepticism. Sam Altman himself admitted three weaknesses. A comprehensive look at benchmarks, competition, and Korean language improvements.

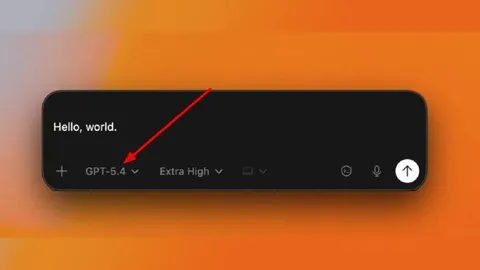

It has been one week since GPT-5.4 launched on March 5, 2026. Released in three variants (the base model, Thinking, and Pro), it ships with native Computer Use, Tool Search, and a 1-million-token context window as its headline features. Industry reactions immediately split into two camps. Every.to ran the headline "OpenAI is Back," while Stephen Smith drew a clear line: "It's good. But it's not ahead."

Now that the initial excitement has settled, more measured assessments are emerging. Drawing on benchmark numbers, real-world user feedback, and Sam Altman's own remarks, here is where GPT-5.4 actually stands.

Key New Features and Benchmark Results

GPT-5.4's most eye-catching new feature is native computer use. Built on Playwright, it reads screenshots, controls mouse and keyboard input, and automates tasks across multiple applications. On OSWorld it scored 75.0%, the first time a model has surpassed the human baseline of 72.4%. This is a result on a single benchmark rather than proof of general superiority, but it shows that on this set of desktop tasks the model now completes more of them than the human baseline.

The second notable feature is Tool Search. Instead of stuffing all tool definitions into the prompt, Tool Search loads only the necessary tools on demand, cutting token usage by 47%. For developers, it delivers cost savings and efficiency gains simultaneously. A new financial plugin also signals expansion into the enterprise domain.
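The idea behind on-demand tool loading can be illustrated with a minimal Python sketch. This is not OpenAI's implementation or API: the tool catalog, the keyword-overlap matching, and the word-count token estimate are all assumptions made for illustration. It only shows why sending a few relevant tool definitions costs fewer prompt tokens than sending every definition on every request.

```python
# Hypothetical tool catalog; names and descriptions are illustrative only.
TOOLS = {
    "get_weather": "Return the current weather forecast for a city.",
    "send_email": "Send an email message to a recipient.",
    "query_ledger": "Look up financial ledger entries by account and date.",
    "create_invoice": "Create a financial invoice for a customer.",
    "search_web": "Search the web and return the top results.",
}

# Common words ignored when matching a request against tool descriptions.
STOPWORDS = {"a", "an", "the", "for", "to", "and", "by", "up"}


def keywords(text: str) -> set[str]:
    """Lowercase the text, drop a trailing period, and remove stopwords."""
    return set(text.lower().rstrip(".").split()) - STOPWORDS


def rough_tokens(text: str) -> int:
    """Crude token estimate (assumption): one token per whitespace-separated word."""
    return len(text.split())


def search_tools(query: str, catalog: dict[str, str], limit: int = 2) -> dict[str, str]:
    """Return up to `limit` tools whose descriptions share keywords with the query."""
    query_words = keywords(query)
    scored = []
    for name, description in catalog.items():
        overlap = len(query_words & keywords(description))
        if overlap:
            scored.append((overlap, name))
    # Highest overlap first; ties broken alphabetically for determinism.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return {name: catalog[name] for _, name in scored[:limit]}


def prompt_tokens(tools: dict[str, str]) -> int:
    """Estimated tokens needed to place these tool definitions in a prompt."""
    return sum(rough_tokens(f"{name}: {desc}") for name, desc in tools.items())


eager = prompt_tokens(TOOLS)  # every definition sent up front
selected = search_tools("create a financial invoice for the customer", TOOLS)
lazy = prompt_tokens(selected)  # only the matching definitions sent

print(f"all tools: {eager} tokens, selected {list(selected)}: {lazy} tokens")
```

The saving in a toy catalog like this says nothing about the 47% figure reported for GPT-5.4; the actual reduction depends on how many tools are registered and how verbose their definitions are.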

The benchmark numbers are equally impressive. GPT-5.4 scored 83.0% on GDPval, which measures professional tasks across 44 occupations, and 73.3% on ARC-AGI-2 (83.3% for the Pro variant). Hallucination error rates dropped 33% at the level of individual claims and 18% at the level of full responses. The context window extends to 1 million tokens, with a maximum output of 128K tokens.

"OpenAI is Back" vs "Just Catching Up": Divided Reactions

Leading the positive camp are endorsements from prominent AI figures. AI developer Matt Shumer called it "the best model in the world, bar none." Cloudflare shared a case study where a single engineer rebuilt Next.js in one week using just $1,100 in API costs. Every.to declared OpenAI's triumphant return with its headline "GPT-5.4: OpenAI Is Back."

The skeptical side is equally vocal. Stephen Smith stated plainly: "It's good. But it's not ahead." The model still shows gaps in common-sense reasoning, and users report that its loosened restrictions have led to confabulation and prompt leakage. Some users have even expressed nostalgia for the GPT-4o era. The critical assessment boils down to: "A flashy comeback, yes. A throne reclaimed, no."

Three Weaknesses Sam Altman Admitted

What stands out is that Sam Altman publicly acknowledged GPT-5.4's limitations. He identified three specific weaknesses.

First, design sensibility. He admitted GPT-5.4 lags behind Claude and Gemini in UI/UX design and visual output generation. Second, real-world context understanding. Beyond simple text processing, the model needs improvement in comprehending and reflecting the complexity of real-world situations. Third, complex task completion. The ability to see multi-step, long-running tasks through to completion remains insufficient.

It is unusual for a CEO to publicly admit weaknesses. This can be read as a display of transparency, or as evidence that competition has grown too fierce to hide shortcomings any longer. Either way, it suggests that OpenAI views GPT-5.4 as a transitional product rather than a finished one.

Competitive Landscape: The Three-Way Race

As of March 2026, the frontier AI model competition is a full three-way race. Claude Opus 4.6 holds a narrow lead in coding with 80.8% on SWE-bench, excelling in complex reasoning and long-form code generation. Gemini 3.1 Pro has established itself as the best-value model, combining pricing of $2 input and $12 output per million tokens with a strong 80.6% on SWE-bench.

GPT-5.4 holds clear advantages in agentic and computer use tasks, but trails Claude in coding and Gemini in cost-effectiveness. Its API pricing of $2.50-$5.00 input and $15-$20 output (Pro at $30 input, $180 output) is not exactly cheap. The prevailing view is: "GPT-5.4 has returned to parity. But it has not taken the lead."
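To make the price gap concrete, here is a small Python sketch that computes the cost of a single request from the figures quoted in this article. It assumes the quoted prices are in US dollars per million tokens, uses the low end of GPT-5.4's quoted range, and picks a hypothetical workload of 100,000 input tokens and 10,000 output tokens. Claude is left out because the article gives no price for it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    """API price in USD, assumed to be per one million tokens."""
    input_per_million: float
    output_per_million: float


# Figures as quoted in the article; the per-million-token unit is an assumption.
PRICES = {
    "GPT-5.4 (low end of range)": Price(2.50, 15.00),
    "GPT-5.4 Pro": Price(30.00, 180.00),
    "Gemini 3.1 Pro": Price(2.00, 12.00),
}


def request_cost(price: Price, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one request with the given token counts."""
    total = (input_tokens * price.input_per_million
             + output_tokens * price.output_per_million)
    return total / 1_000_000


# Hypothetical workload: a long prompt with a moderately long answer.
INPUT_TOKENS = 100_000
OUTPUT_TOKENS = 10_000

costs = {
    name: request_cost(price, INPUT_TOKENS, OUTPUT_TOKENS)
    for name, price in PRICES.items()
}

for name, cost in costs.items():
    print(f"{name}: ${cost:.2f}")
# GPT-5.4 (low end of range): $0.40
# GPT-5.4 Pro: $4.80
# Gemini 3.1 Pro: $0.32
```

Under these assumptions the base model costs 25% more per request than Gemini 3.1 Pro, while the Pro variant costs twelve times the base model.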

Korean Language Performance: A Night-and-Day Improvement

In the Korean market, GPT-5.4's biggest talking point is its dramatically improved Korean language performance. Users describe it as a "night-and-day difference" compared to GPT-5, with significant improvements in Korean comprehension and generation. It achieved perfect scores across all subjects on the Korean CSAT (Suneung). The awkwardness and mistranslation issues that plagued previous versions have been largely resolved.

Domestic industry reactions are noteworthy. AI Times ran a piece on how GPT-5.4's agentic capabilities herald "the dawn of the AI employee era." NC AI's Kim Geun-gyo, however, noted that reactions have been "more subdued than expected" and emphasized the need for sovereign AI companies. As dependency on global AI models grows, securing domestic AI capabilities becomes increasingly important.

One Week Verdict: A Return, Not a Reclamation

One week after GPT-5.4's launch, industry assessments are converging. OpenAI is back. But it has not returned as number one. The above-human-baseline results in computer use and the gains in agentic tasks are real. Yet falling behind Claude in coding and Gemini in cost-effectiveness, with the CEO himself admitting weaknesses, paints a picture markedly different from the OpenAI of a year ago.

The frontier AI race is no longer a game fought over a single throne. It has entered a multipolar era where models with different strengths coexist. GPT-5.4 is the model that reclaimed OpenAI's seat at the table, not the model that reclaimed the crown. What comes next depends on how reliably GPT-5.4 performs in real-world production environments and how quickly the weaknesses Altman acknowledged are addressed.