GPT-5o? OpenAI Rumored to Be Developing a Next-Gen Multimodal Omni Model

Rumors have emerged that OpenAI is developing a 'truly omni model' capable of accepting and generating text, images, video, and audio. The claim has gained credibility because two OpenAI employees engaged with the leak posted by API monitoring specialist legit_api.

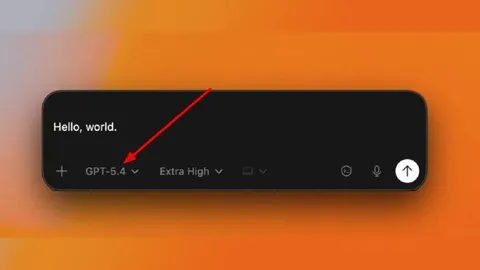

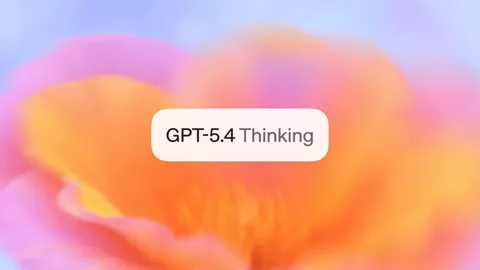

Just four days after the GPT-5.4 launch, a rumor hinting at OpenAI's next move has surfaced. @legit_api, a verified X account known for monitoring API endpoints and web app code, posted that "OpenAI is developing a new Omni model." Two OpenAI employees then engaged with the thread, lending the claim more weight than mere speculation. Could the fully multimodal input-output experience that GPT-4o promised but never delivered finally be on the horizon?

The Source: Who Is legit_api?

The rumor originates from @legit_api (Legit), a verified X account. Legit is not an OpenAI insider but an external specialist who monitors API endpoints and web app code. The account gained recognition in the AI community for probing API endpoints ahead of the GPT-5.4 launch to confirm that the model existed.

legit_api's key claims are as follows: OpenAI is developing a new, fully multimodal omni model. It can accept text, images, video, and audio as input, and its output can presumably be any combination of the same. legit_api described it as "what the original GPT-4o was supposed to be, but never was."

OpenAI Employee Reactions Boost Credibility

What decisively elevated the rumor's credibility was the reaction of two OpenAI employees.

The first is Brandon McKinzie (@mckbrando), a Member of Technical Staff at OpenAI. He is a key contributor to the o1 and o3 models and the developer of "Thinking with Images," the image reasoning capability in o3 and o4-mini. A multimodal specialist who previously worked on the MM1 model at Apple, McKinzie recently stated, "This is just the beginning. The team is already hard at work on the next generation of models," and he also engaged with the omni model thread.

The second is Houda Nait El Barj (@Houda_nait), a Research Lead at OpenAI who heads "Experience Research on Emerging AI Systems." She has been involved in the Jony Ive/LoveFrom hardware project and in Operator development. A Stanford economics Ph.D., she replied to the thread with "Coming soon!!" That reply strongly suggests awareness of an internal roadmap.

GPT-4o's Unfulfilled Promise: A Second Attempt After Two Years

Understanding this rumor requires revisiting GPT-4o's history. GPT-4o launched in 2024, and the "o" in its name stood for "omni." It was marketed as a truly multimodal model spanning text, images, and audio, but in practice it fell short of full multimodal input and output: image and audio output were limited, and video input and output were not supported at all.

The subsequent GPT-5 series (5.0, 5.1, 5.2, 5.3, and 5.4) iterated rapidly, but the situation has not fundamentally changed. Even GPT-5.4, launched just four days ago, accepts text and image inputs but outputs only text. Despite its massive 1-million-token context window and powerful reasoning capabilities, it still lacks native audio output and video processing.

In other words, two years have passed since GPT-4o branded itself as "omni," yet OpenAI has still not delivered a model that freely handles input and output across all modalities. If this rumor proves true, it could represent the fulfillment of a promise deferred for two years.

The Connection to New Audio Model Reports

This omni model rumor does not exist in isolation. In January 2026, SiliconANGLE reported that OpenAI planned to launch a new audio model architecture in the first quarter. Taken together, that report and the omni model rumor suggest OpenAI may be preparing a dedicated multimodal model line separate from the text-centric GPT-5 series.

McKinzie's background supports this interpretation. Before joining OpenAI, he worked on MM1, a multimodal large language model at Apple; at OpenAI, he implemented image reasoning capabilities in o3 and o4-mini. A single model that processes text, images, audio, and video falls squarely within his area of expertise.

Community Reaction and Spread

The rumor's spread is currently limited. On X, legit_api's original post has garnered approximately 19,300 views, with reactions coming primarily from the AI community. In Korea, it attracted attention on DC Inside's Singularity Gallery, with roughly 3,600 views, 26 upvotes, and 29 comments. However, it has not yet gained traction on Reddit or been covered by major tech outlets, which places it firmly in the early-stage rumor category.

Community sentiment is a mix of excitement and skepticism. Given GPT-4o's precedent, some argue they "won't believe it until full multimodal is actually delivered," while others point to the direct engagement from OpenAI employees as grounds for optimism that "this time might be different."

The Homework GPT-4o Left Behind: Can They Deliver After Two Years?

Here's what we know so far: a credible API monitoring expert reported the omni model's development, a multimodal specialist at OpenAI engaged with the thread, and a Research Lead responded with "coming soon." Separate reporting about a new audio model architecture points in the same direction.

However, all of this remains a combination of rumors and circumstantial evidence rather than an official announcement. The release timeline, model name (whether it will be GPT-5o or a separate brand), and specific feature scope are entirely unconfirmed. Whether the fully multimodal input-output that GPT-4o promised two years ago will finally be realized this time remains to be seen. OpenAI's next move deserves close attention.

Sources

- X (@legit_api) - "OpenAI is developing a new Omni model"
- X (@mckbrando) - Brandon McKinzie, OpenAI Member of Technical Staff
- X (@Houda_nait) - Houda Nait El Barj, OpenAI Research Lead
- OpenAI - "Introducing GPT-5.4"
- TechCrunch - "OpenAI launches GPT-5.4 with Pro and Thinking versions"
- SiliconANGLE - "Report: OpenAI plans to launch new audio model in first quarter"